In any Kubernetes cluster setup that has been in use for a while, many teams will create their own Kubernetes resources. With an array of required and optional parameters, each team will create and configure them as per their specific needs.

At some point, there is bound to be a need for standardisation. Besides, every organisation will have their own governance and legal policies to be enforced.

With that in mind, an open-source, general-purpose policy engine was created. Open Policy Agent (OPA, pronounced “oh-pa”) helps IT administrators unify policy enforcement across the stack.

OPA has a high-level declarative language (Rego) that lets you specify policy as code. It also exposes simple APIs that let you decouple policy decision-making from your software.

Let’s see how OPA is implemented in Kubernetes.

About Gatekeeper

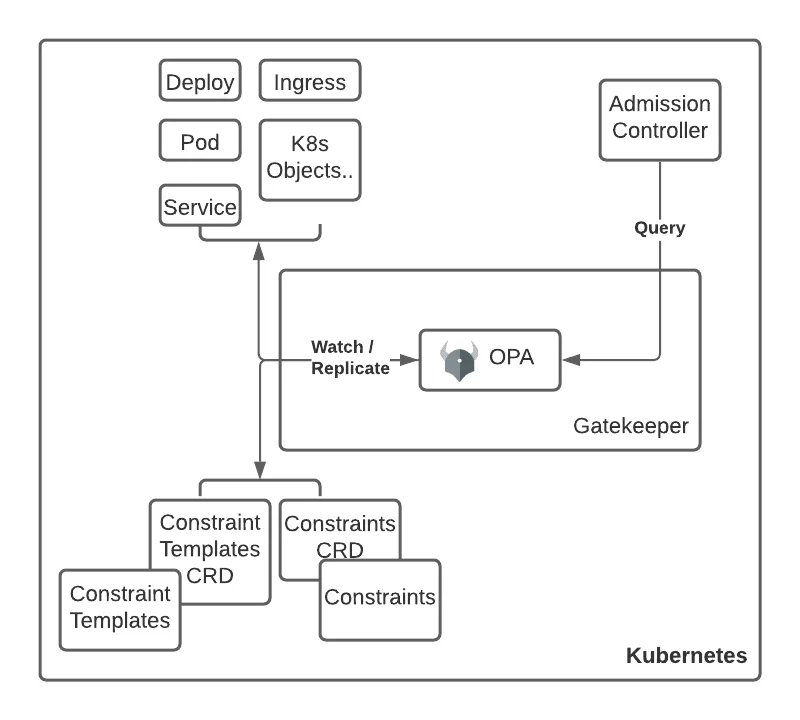

Gatekeeper is a tool that enables you to audit and enforce policies on Kubernetes resources. It is a Kubernetes-specific implementation of OPA. It uses the OPA Constraint Framework to validate requests to create and update resources on Kubernetes clusters.

Gatekeeper lets administrators define policies as constraints, which are sets of conditions that a request must meet. Kubernetes rejects any request that violates them. Gatekeeper uses admission controller webhooks to intercept admission requests before they are persisted as objects in Kubernetes.

Setting up Gatekeeper

If you have Helm installed, installing Gatekeeper is straightforward:

$ helm repo add gatekeeper https://open-policy-agent.github.io/gatekeeper/charts

$ helm install gatekeeper/gatekeeper --name-template=gatekeeper --namespace gatekeeper-system --create-namespace

Enforcing policies on Gatekeeper

All policies are written in OPA’s declarative language, Rego. For us to understand how Gatekeeper enforces policies, we need to understand three Custom Resource Definitions (CRDs) made in Kubernetes for this purpose:

- Constraint

- Constraint template

- Config

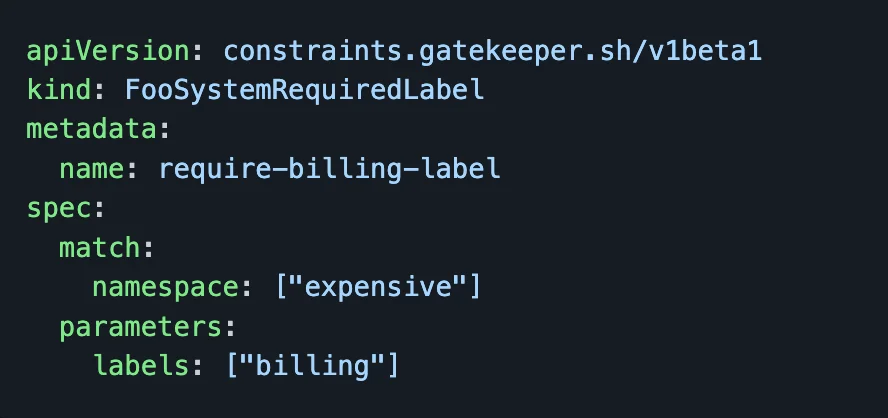

A Constraint is a declaration that specifies the set of requirements that the system must meet. Here is a sample Constraint that requires all objects in the expensive namespace to have a billing label. This Constraint is of the kind FooSystemRequiredLabel. We will use this information in the next step.

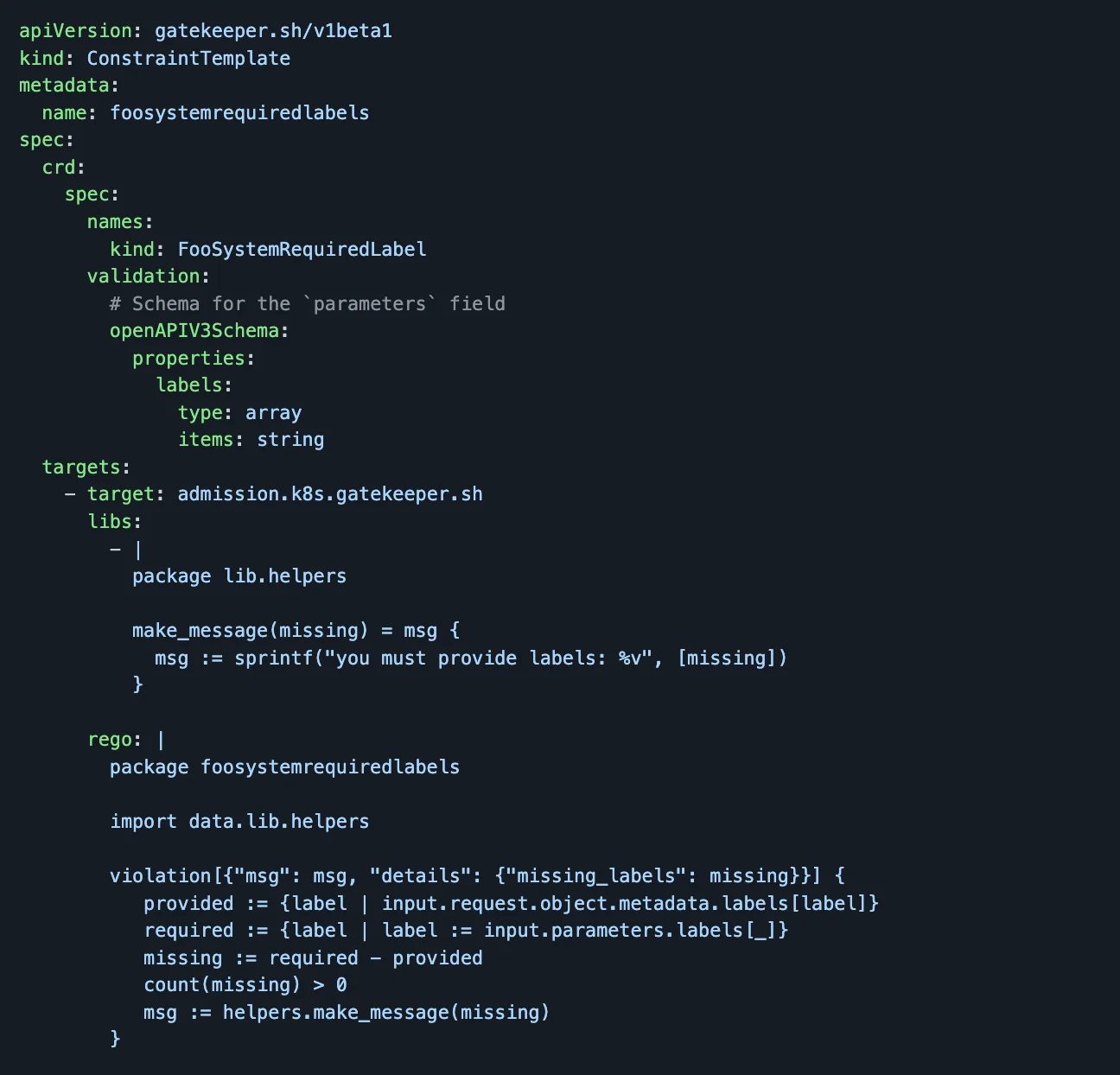
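In text form, a constraint of this shape might look like the sketch below. It assumes the `constraints.gatekeeper.sh/v1beta1` API version and the `match` and `parameters` layout used by Gatekeeper constraints; the name `require-billing-label` is illustrative.

```yaml
# Sketch of a Constraint of kind FooSystemRequiredLabel.
# Assumption: a ConstraintTemplate defining this kind is already installed.
apiVersion: constraints.gatekeeper.sh/v1beta1
kind: FooSystemRequiredLabel
metadata:
  name: require-billing-label   # illustrative name
spec:
  match:
    # Only objects in the "expensive" namespace are checked.
    namespaces: ["expensive"]
  parameters:
    # Labels every matched object must carry.
    labels: ["billing"]
```

The `match` block selects which objects the policy applies to, while `parameters` carries the values handed to the template's Rego code.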

A Constraint Template allows people to declare new constraints. To do that, they provide the expected input parameters and write the Rego code necessary to enforce their intent.

For example, a Constraint Template is what defines the Constraint kind “FooSystemRequiredLabel” used in the previous example.
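A minimal sketch of such a template follows, modelled on the required-labels example from the OPA Constraint Framework. It assumes the `templates.gatekeeper.sh/v1` API version and Gatekeeper's default Rego syntax, so treat it as a starting point rather than a definitive implementation.

```yaml
# Sketch of a ConstraintTemplate that defines the FooSystemRequiredLabel kind.
apiVersion: templates.gatekeeper.sh/v1
kind: ConstraintTemplate
metadata:
  # Assumption: Gatekeeper expects the name to be the lowercased kind.
  name: foosystemrequiredlabel
spec:
  crd:
    spec:
      names:
        kind: FooSystemRequiredLabel
      validation:
        # Schema for the "parameters" field of each Constraint.
        openAPIV3Schema:
          type: object
          properties:
            labels:
              type: array
              items:
                type: string
  targets:
    - target: admission.k8s.gatekeeper.sh
      rego: |
        package foosystemrequiredlabels

        # A violation is reported when at least one required label is missing.
        violation[{"msg": msg, "details": {"missing_labels": missing}}] {
          provided := {label | input.review.object.metadata.labels[label]}
          required := {label | label := input.parameters.labels[_]}
          missing := required - provided
          count(missing) > 0
          msg := sprintf("you must provide labels: %v", [missing])
        }
```

The Rego rule compares the set of labels on the incoming object with the set listed in the Constraint's `parameters.labels` and reports whichever are missing.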

The Rego semantics for Constraints are described in the Gatekeeper documentation. We can think of a Constraint Template as defining a function and a Constraint as invoking it.

The last CRD is a Config. Some constraints can’t be written without access to the state of other objects in the infrastructure.

For example, suppose we want to make sure that every Ingress hostname in the setup is unique. For this rule to work, we need access to all other Ingresses. We get that by using the Config CRD to sync data into the OPA engine.
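A Config for the Ingress case could be sketched as below. It assumes the `config.gatekeeper.sh/v1alpha1` API version and Gatekeeper's default `gatekeeper-system` namespace; adjust the `syncOnly` entries to the kinds your policies need to look up.

```yaml
# Sketch of a Config that syncs Ingress objects into OPA.
apiVersion: config.gatekeeper.sh/v1alpha1
kind: Config
metadata:
  name: config                  # Gatekeeper reconciles the Config named "config"
  namespace: gatekeeper-system  # assumption: default install namespace
spec:
  sync:
    syncOnly:
      # Each entry names one resource kind to replicate into OPA.
      - group: "networking.k8s.io"
        version: "v1"
        kind: "Ingress"
```

Once synced, these objects are available to Rego policies under `data.inventory`, which is what allows a rule to compare a new Ingress with the existing ones.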

The Config is applied to the cluster with the kubectl command:

$ kubectl apply -f https://raw.githubusercontent.com/open-policy-agent/gatekeeper/master/demo/basic/sync.yaml

Behind the scenes

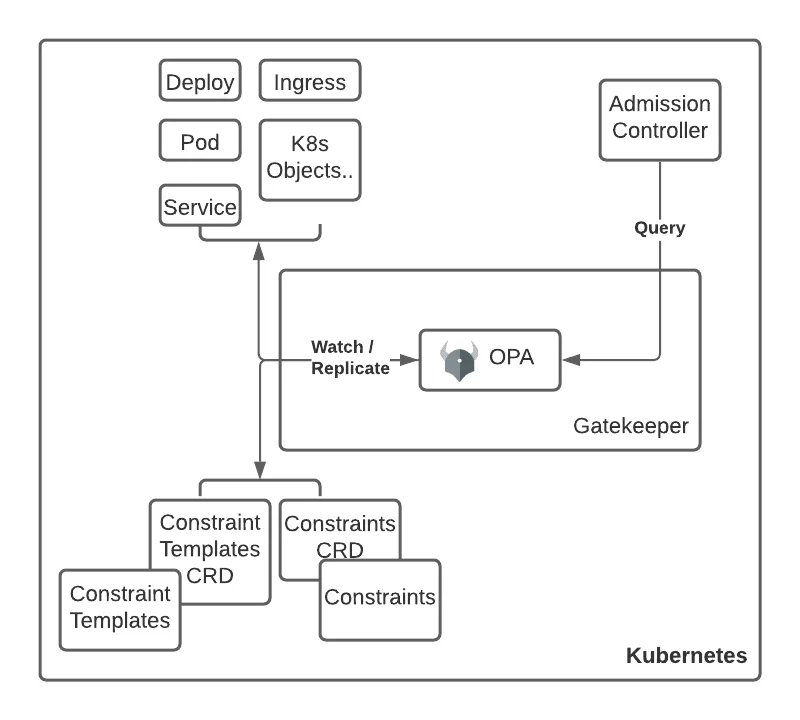

Let’s take the above example and briefly see how the policy will be applied in a Kubernetes cluster with Gatekeeper.

The admission controller sends every created or updated resource to the Gatekeeper service for evaluation. First, the ConstraintTemplate from our previous example, “foosystemrequiredlabels”, is applied to the cluster.

The OPA process inside the Gatekeeper deployment watches the cluster for all ConstraintTemplates.

Once OPA detects the new ConstraintTemplate and has confirmed that it is valid, a new custom resource kind (here, FooSystemRequiredLabel) becomes available in the cluster. OPA also watches the cluster for all Constraints of the kinds defined by ConstraintTemplates. Next, the Constraint of kind FooSystemRequiredLabel is applied to the cluster.

Once the Constraint is validated, all resources defined in the Constraint scope will be evaluated by the policy defined in the corresponding ConstraintTemplate.
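To watch this evaluation happen, one could apply two objects like the ones sketched below with `kubectl apply -f`. The sketch assumes the billing-label Constraint from the earlier example is active and that the expensive namespace exists; the ConfigMap names and the label value are hypothetical.

```yaml
# Expected to be denied: no "billing" label in the "expensive" namespace.
apiVersion: v1
kind: ConfigMap
metadata:
  name: demo-unlabelled         # hypothetical name
  namespace: expensive
data:
  example: "value"
---
# Expected to be admitted: carries the required "billing" label.
apiVersion: v1
kind: ConfigMap
metadata:
  name: demo-labelled           # hypothetical name
  namespace: expensive
  labels:
    billing: team-a             # hypothetical label value
data:
  example: "value"
```

If the policy is in force, the first object should be rejected by the admission webhook with the message built in the template's Rego code, while the second should be created normally.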

You can pull code for another demo Gatekeeper implementation of constraints, templates and configs here to try it yourself.

Conclusion

Gatekeeper has simplified defining and enforcing policies in a Kubernetes cluster. With the help of the OPA framework, it provides a rule-based, templatised system that helps standardise how resources are created and updated in a Kubernetes setup. The best part is that all of this is done without sacrificing development agility and operational independence.