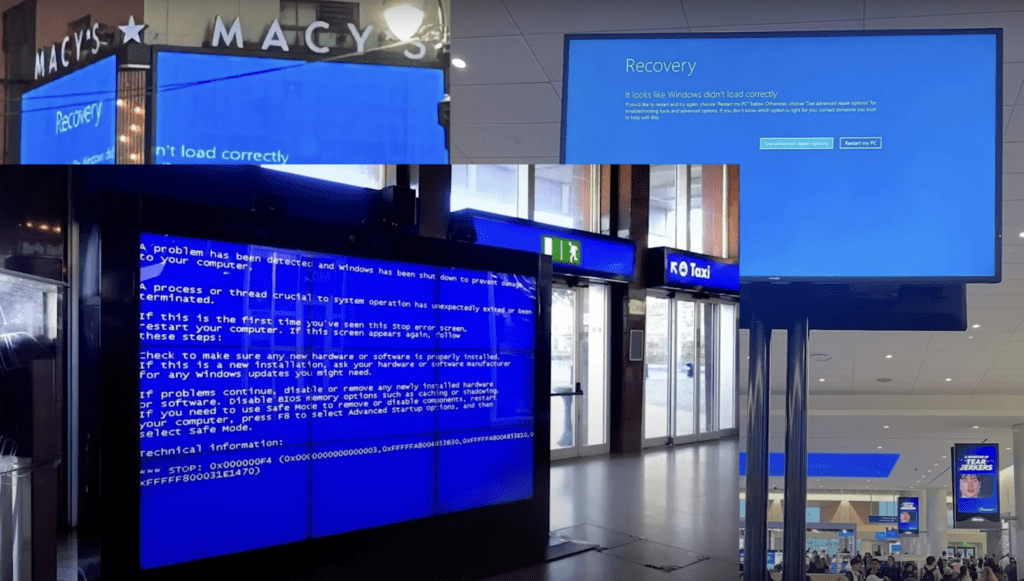

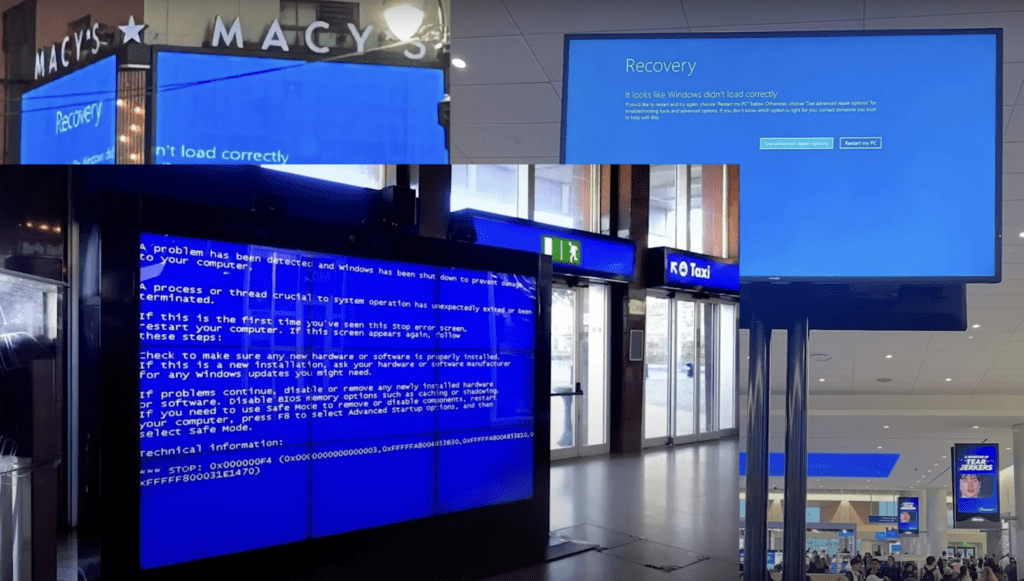

The CrowdStrike Incident: A Global Wake-Up Call for Cloud Resilience

On July 19, 2024, the digital world experienced a seismic shock when a CrowdStrike update to Falcon Sensor, its software that scans computers for intrusions and signs of hacking, led to a global outage affecting countless organizations worldwide. As a Principal Product Evangelist at Taikun, I believe this incident highlights the critical […]

Announcing Our Strategic Partnership with Sardina Systems

We’re thrilled to announce our strategic partnership with Sardina Systems, a leading provider of OpenStack cloud solutions. This collaboration brings together the best of both worlds – Taikun CloudWorks’ advanced ‘Managed Kubernetes’ and application delivery platform, and Sardina Systems’ FishOS cloud management platform. At Taikun, we’re on a mission to make Kubernetes almost invisible to […]

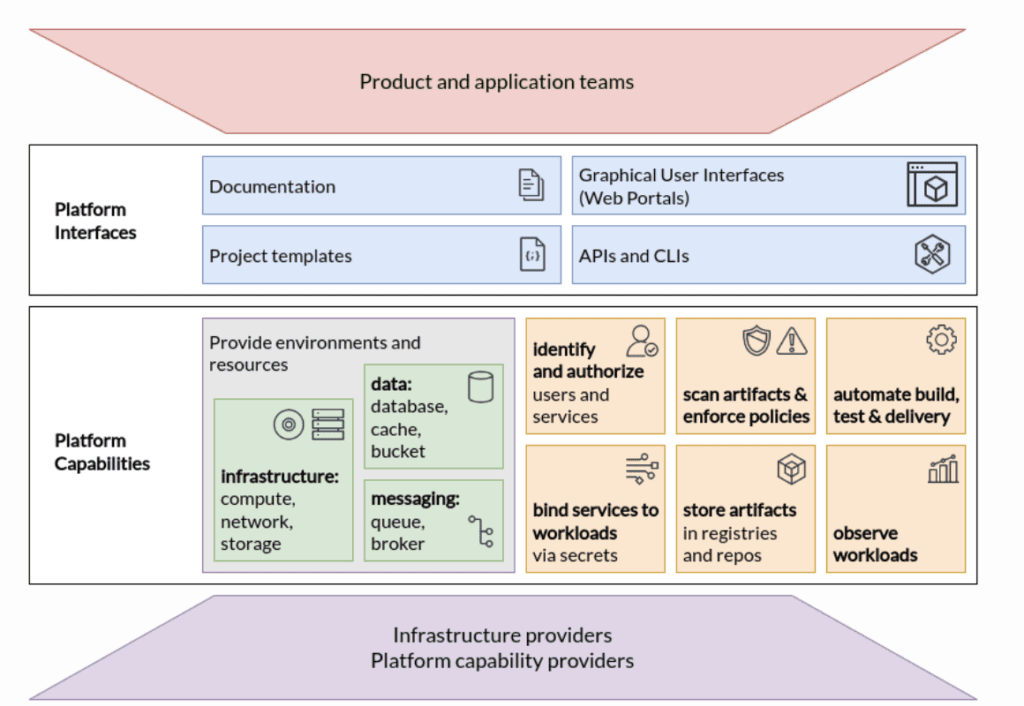

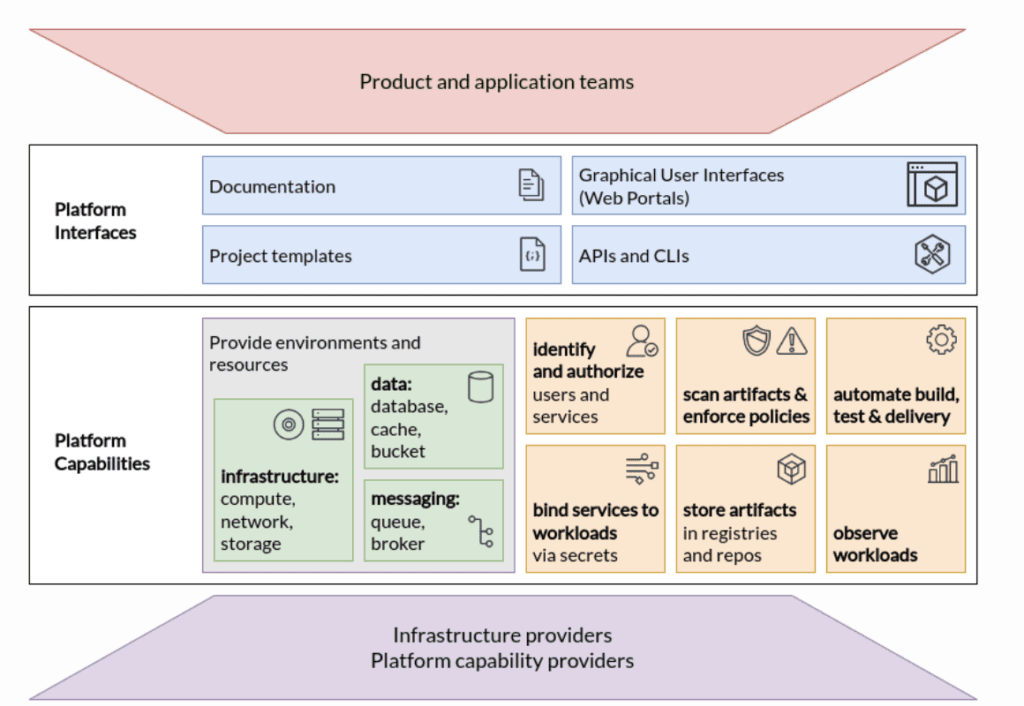

How Kubernetes Powers the Foundation of Platform Engineering for Cloud-Native Development

Platform engineering is an emerging trend, especially in the cloud-native world. According to a recent report by CloudBees, 83% of the surveyed enterprises have made significant progress in adopting platform engineering in their development cycles.

Benefits and Challenges of Self-Hosted Kubernetes

Many organizations initially opt for managed Kubernetes services such as Amazon EKS, Azure AKS, or Google GKE to simplify operations and leverage the cloud provider’s expertise. As businesses grow, however, the decision of whether to switch from managed services to self-hosted Kubernetes becomes an important one.

How to Expose Deployed Applications in Kubernetes?

Kubernetes has emerged as the de facto container orchestration platform. Its ability to seamlessly manage and scale containerized applications has revolutionized how software is developed, deployed, and managed. According to CNCF’s Annual Survey 2021, 96% of organizations have either implemented or are currently assessing Kubernetes as their container orchestration platform, the highest figure recorded since 2016.

Simplifying Kubernetes Management: Eradicating Manual Configurations for Easier Management

Kubernetes has emerged as the de facto standard for orchestrating containerized applications as businesses seek more efficiency in software development and deployment. However, manually configuring a Kubernetes cluster can quickly become daunting. This article dives deep into this issue and provides actionable advice for streamlining your Kubernetes administration!

How to enforce policies in Kubernetes with Gatekeeper

In any Kubernetes cluster setup that has been in use for a while, many teams will create their own Kubernetes resources. With an array of required and optional parameters, each team will create and configure them as per their specific needs. At some point, there is bound to be a need for standardisation. Besides, every organisation will have their own governance and legal policies to be enforced. With that in mind, an open-source, general-purpose policy engine was created. Open Policy Agent (OPA, pronounced “oh-pa”) helps IT administrators unify policy enforcement across the stack.

What is Kubernetes Stateful Set? – A Guide to Managing Stateful Applications

In today’s increasingly digital environment, data is essential to running any enterprise. Therefore, businesses must ensure their data is secure and readily accessible. Cloud computing has enabled companies to keep their data in a central location and access it from any device at any time. However, stateful apps in the cloud present unique management challenges due to their reliance on persistent storage.

The Pros and Cons of using Kubernetes for Microservices Architecture

Kubernetes has quickly gained traction as a platform for managing microservices architectures because it helps businesses deploy, manage, and scale containerised applications. The CNCF’s Cloud Native Survey indicated that 96% of firms are either actively using or evaluating Kubernetes, a significant increase from previous surveys.

How to Use Kubernetes for Machine Learning and Data Science Workload

This piece explores Kubernetes’s strengths and how they can be applied to data science and machine learning projects. We will discuss its fundamental principles and building blocks to help you successfully deploy and manage machine learning workloads on Kubernetes. Moreover, this article offers essential insights and practical guidance on making the most of this powerful platform, whether you’re just starting with Kubernetes or trying to enhance your machine learning and data science operations.