“Amazon engineers deploy code every 11.7 seconds, on average.” Puppet Labs DevOps report.

Virtualization has changed dramatically over the last 10 years. For a long time, virtual machines ruled the virtualization world. But ever since Docker Engine was launched in 2013, containers have become the go-to virtualization method for developers. Over time, software development has shifted from a blame game of “it-works-on-my-machine” to smooth deployments performed thousands of times every day.

We covered what containers are in a previous post. In this post, we look at what a VM is, how containers and virtual machines differ from each other, and when one should be used over the other.

Let’s start with understanding what exactly virtualization means.

Virtualization: what and why

Virtualization is a way to create a virtual instance of a computer system by abstracting it at some level. This abstraction could be at the hardware level, operating system level, network level, or application level.

Containers and Virtual machines are both virtualization tools that provide abstraction at different levels. We will talk more about the differences between these in the next section.

Virtualization has many benefits. It lets us experiment faster because we do not have to acquire the actual resources first. It also saves money, since a virtual resource costs much less than buying the physical one.

Abstraction from virtualization also helps in porting software across different infrastructures smoothly and allows for fast deployments.

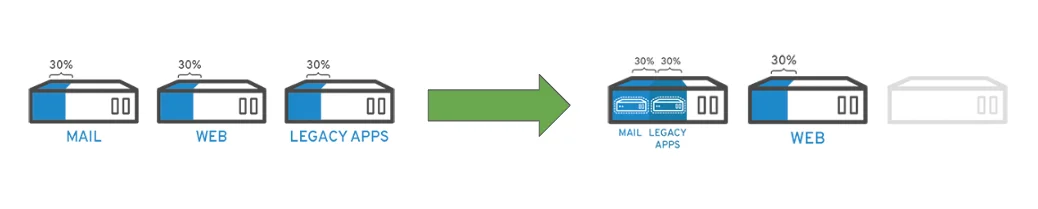

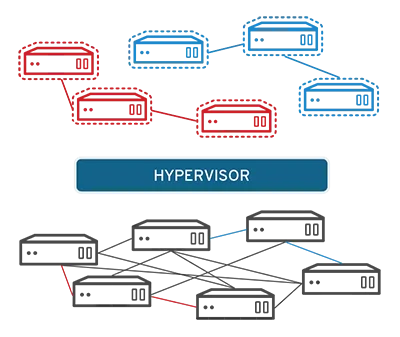

The example above shows how virtualization conserves hardware resources. Without virtualization, as shown on the left, one physical server is dedicated to each function: in this case, one server each for Mail, Web, and Legacy Apps.

If each server runs at just 30% of its capacity, most of that expensive hardware sits idle.

With virtualization, however, as seen on the right, two web servers are virtualized on the same server hardware. This not only saves on hardware costs but also increases efficiency by running more applications on the same amount of hardware.
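
The savings can be estimated with simple arithmetic. The sketch below is a deliberately simplified model, not a sizing tool: it assumes all servers are the same size, looks only at average utilization, and ignores memory, peak load, and hypervisor overhead. The function name `hosts_needed` and the 80% `target_utilization` ceiling are illustrative assumptions.

```python
import math


def hosts_needed(utilizations, target_utilization=0.8):
    """Estimate how many equally sized hosts can carry the given workloads.

    Each entry in `utilizations` is the fraction of one server a workload
    uses (0.3 means 30%). `target_utilization` is an assumed ceiling that
    leaves some headroom on every host.
    """
    if not 0 < target_utilization <= 1:
        raise ValueError("target_utilization must be in (0, 1]")
    total_load = sum(utilizations)
    # Round first so floating-point noise does not push the result up a host.
    return math.ceil(round(total_load / target_utilization, 9))


# Three dedicated servers, each about 30% utilized, as in the example above.
workloads = {"mail": 0.3, "web": 0.3, "legacy": 0.3}
dedicated = len(workloads)
virtualized = hosts_needed(workloads.values())
print(f"dedicated servers: {dedicated}, virtualized hosts: {virtualized}")
```

Under these assumptions, the three lightly loaded servers fit on two hosts, or on a single host if it is allowed to run at full capacity.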

Let’s now understand what Virtual Machines are.

Understanding Virtual Machines

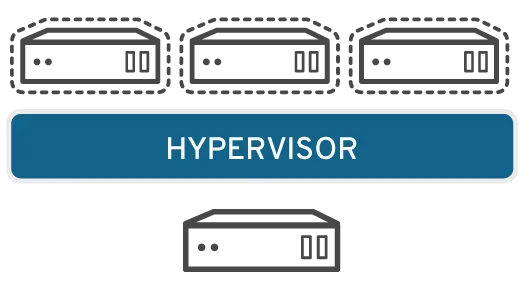

Let’s start with the architecture of a virtual machine. Virtual machines can virtualize at several levels, but the broad architecture looks as follows:

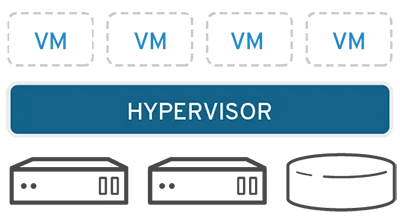

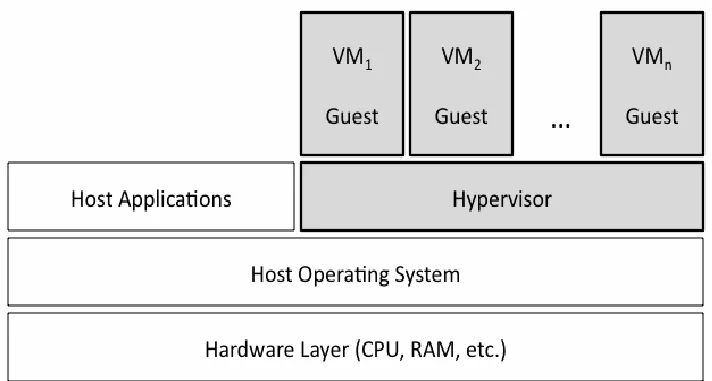

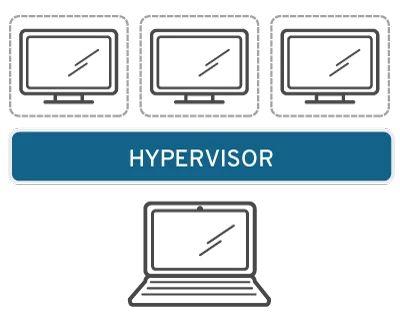

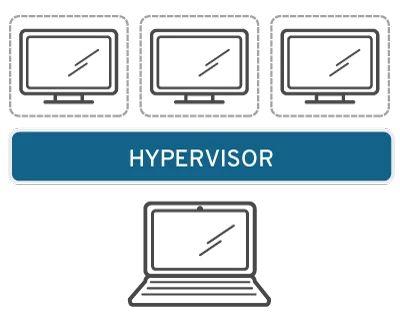

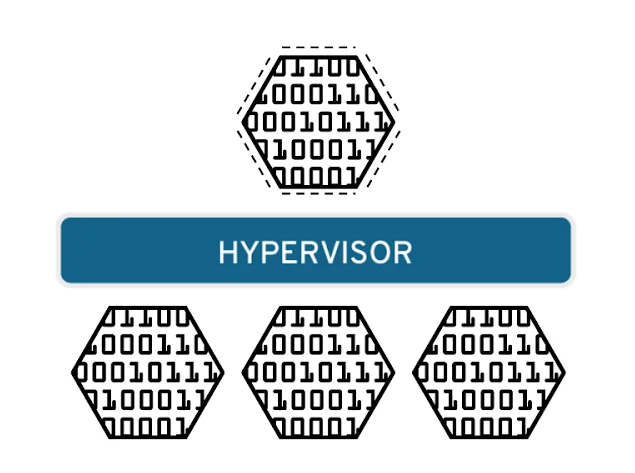

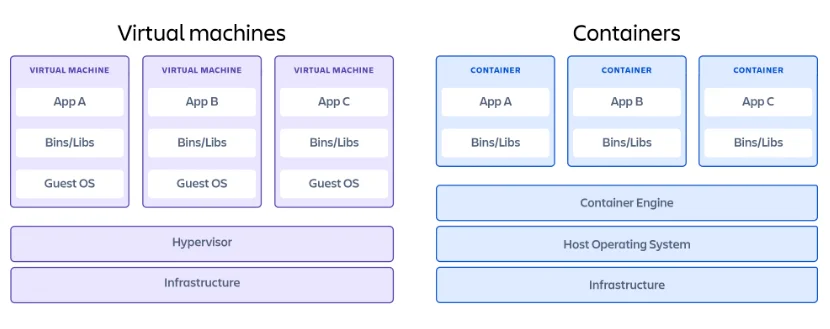

Virtual machines sit on top of software called a hypervisor. The hypervisor separates the VM from the underlying layer and gives virtual machines a unified interface to that layer. The underlying layer can be physical hardware or another operating system.

Type 1 Hypervisor

The first kind of hypervisor sits on top of bare-metal hardware without any operating system to interact with. Such bare-metal hypervisors help Virtual machines interact directly with physical hardware.

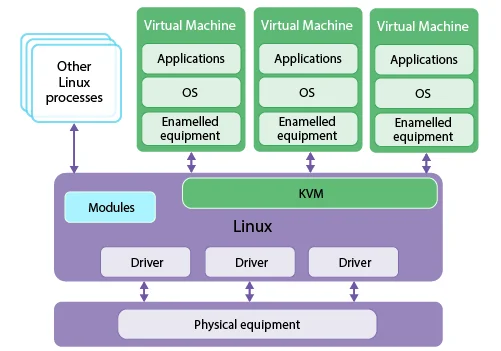

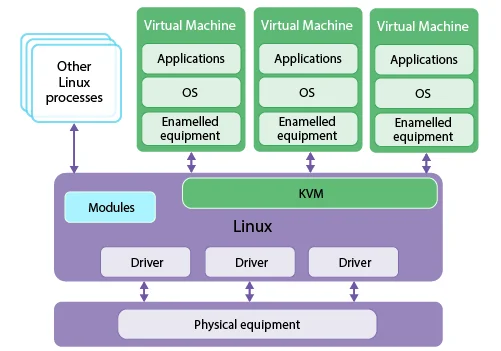

KVM (Kernel-based Virtual Machine) is a popular example of a bare-metal hypervisor. KVM has been part of the Linux kernel since 2007.

KVM and other bare-metal hypervisors help increase the stability of VMs because there is no host OS layer in between. Each VM is directly assigned a share of the host CPU, memory, and storage.
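
As a practical aside, a Linux host can be checked for KVM readiness from a script. The sketch below assumes an x86 Linux machine, where `/proc/cpuinfo` lists the `vmx` (Intel VT-x) or `svm` (AMD-V) CPU flag and a loaded KVM module exposes the `/dev/kvm` device. The function names are illustrative, and on other platforms the check simply reports `False`.

```python
import os


def cpu_supports_hw_virtualization(cpuinfo_text):
    """Return True if a 'flags' line lists vmx (Intel VT-x) or svm (AMD-V)."""
    for line in cpuinfo_text.splitlines():
        if line.startswith("flags"):
            # Everything after the first colon is the space-separated flag list.
            _, _, value = line.partition(":")
            flags = value.split()
            if "vmx" in flags or "svm" in flags:
                return True
    return False


def kvm_available(dev_path="/dev/kvm"):
    """Return True if the KVM device node exists on this machine."""
    return os.path.exists(dev_path)


try:
    with open("/proc/cpuinfo") as f:
        cpuinfo = f.read()
except OSError:
    cpuinfo = ""  # not Linux, or /proc is not readable

print("CPU virtualization extensions:", cpu_supports_hw_virtualization(cpuinfo))
print("KVM device present:", kvm_available())
```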

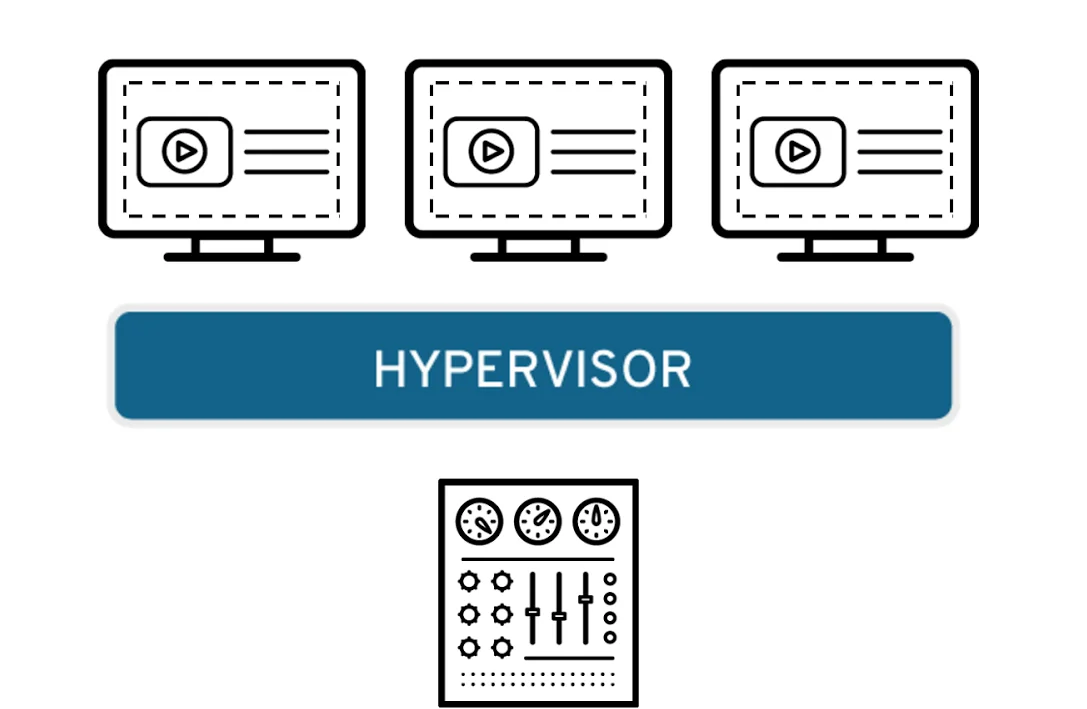

Type 2 Hypervisor

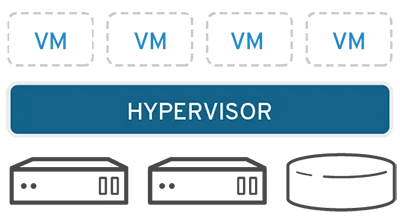

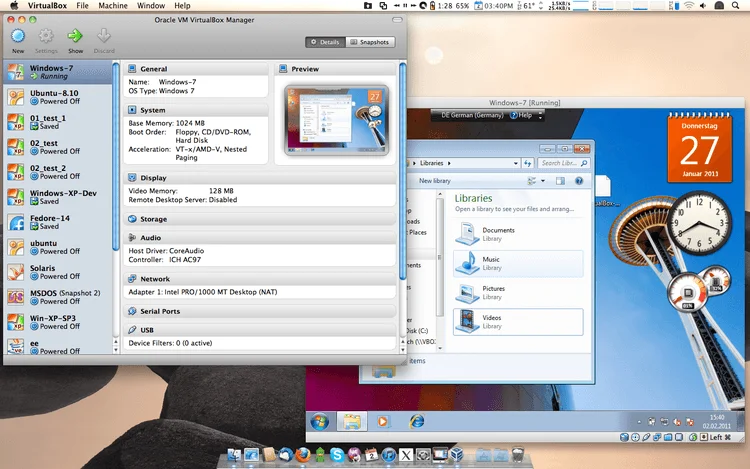

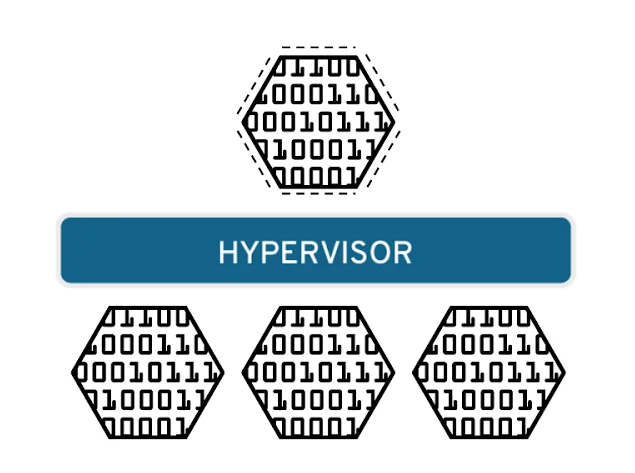

The second type of hypervisor runs on top of a host OS. Such hypervisors abstract over the OS, and the architecture of such systems looks as follows:

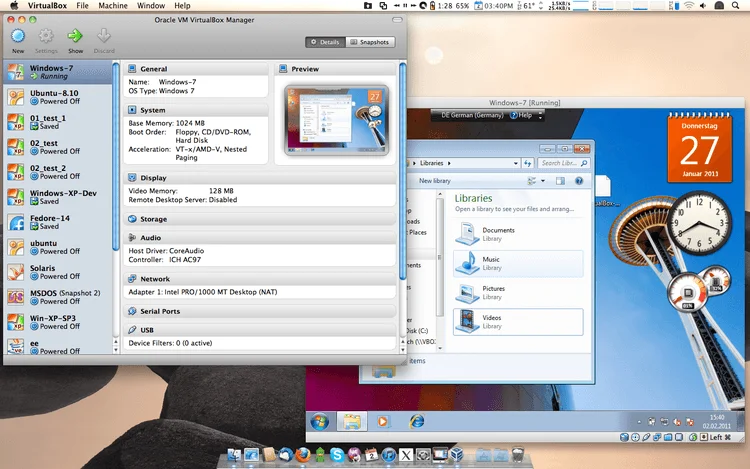

Examples of type 2 hypervisors are Oracle’s VirtualBox and VMware Workstation. Irrespective of the kind of hypervisor being used, the VMs will always have a Guest OS within them.

Types of Virtualization in VMs

There are different types of virtualization that are possible in VMs. Let’s have a look at them briefly.

Operating system virtualization

Operating system virtualization is what a type 1 hypervisor helps VMs achieve. The hypervisor sits on top of physical hardware, and the guest OSes in VMs work directly with the hardware via the hypervisor.

This virtualization happens at the kernel level.

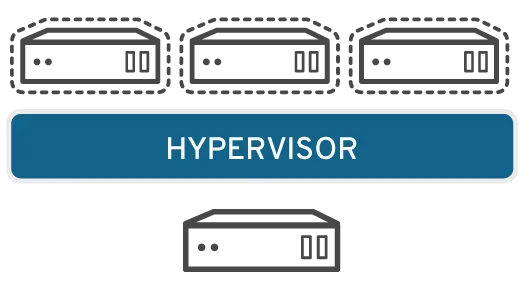

Server virtualization

This style of virtualization is performed by partitioning the hardware, and each virtual server acts like a unique device. This helps in better utilization of server resources and improves the efficiency of the computing environment while isolating each server for better security.

Desktop virtualization

Desktop virtualization works at the user-management level of an operating system. It allows central administrators to provide users with virtual desktop environments, which spares the organization the cost of buying hundreds of physical machines.

It also helps companies to quickly scale up or scale down, and apply mass configurations and updates seamlessly. With central control, this virtualization also helps provide better security in the infrastructure.

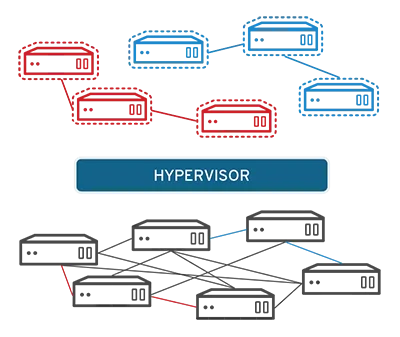

Network virtualization

Network virtualization allows infrastructure teams to separate network functions from the physical infrastructure. Such abstractions help in creating sub-networks easily and segregating any network functions like file sharing, directory services, and IP configuration.

Such virtualization helps reduce the number of physical network components such as switches, routers, and hubs.

Data virtualization

Data virtualization provides an abstraction over distributed data sources. The VMs on top of the hypervisor see them as a single data source.

Data virtualization allows easier transformation of data by the VMs since the variety of data sources is abstracted as a single source. It also allows quicker addition of new data sources.
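
The idea of many sources appearing as one can be illustrated with a toy sketch. This is not how any particular data virtualization product works. The class `VirtualDataSource`, the two reader functions, and the record fields are hypothetical stand-ins for real databases and files.

```python
from typing import Callable, Dict, Iterable, Iterator, List

Record = Dict[str, object]


class VirtualDataSource:
    """Presents several underlying sources to consumers as one stream of records."""

    def __init__(self) -> None:
        self._readers: List[Callable[[], Iterable[Record]]] = []

    def add_source(self, reader: Callable[[], Iterable[Record]]) -> None:
        # Adding a source is one call; consumers do not need to change.
        self._readers.append(reader)

    def records(self) -> Iterator[Record]:
        for reader in self._readers:
            yield from reader()


def crm_rows() -> Iterable[Record]:
    # Stand-in for a database query that already uses the common field names.
    yield {"id": 1, "name": "Ada"}


def csv_rows() -> Iterable[Record]:
    # Stand-in for a file with different column names, normalized on the way out.
    raw = [{"customer_id": 2, "full_name": "Linus"}]
    for row in raw:
        yield {"id": row["customer_id"], "name": row["full_name"]}


catalog = VirtualDataSource()
catalog.add_source(crm_rows)
catalog.add_source(csv_rows)

# The consumer iterates over one source and never sees where a record came from.
names = [record["name"] for record in catalog.records()]
print(names)
```

Each reader normalizes its own format, so adding a new source later means adding one more reader rather than touching the code that consumes the data.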

Public cloud providers offer many of these virtualizations for VMs. Let’s have a look at the most popular public cloud providers and their VM offerings.

Virtual machines in Cloud

Amazon Web Services (AWS) offers Amazon Elastic Compute Cloud, also known as EC2, a web service that lets you create and manage virtual machines of your choice on Amazon’s cloud.

Similar to Amazon’s EC2, Google offers the Compute Engine service to create and manage virtual machines on Google Cloud.

Microsoft’s Azure also has VM offerings of its own. Like the others, it offers both Linux and Windows VMs.

Let us now see how these VMs differ from containers.

Understanding Containers

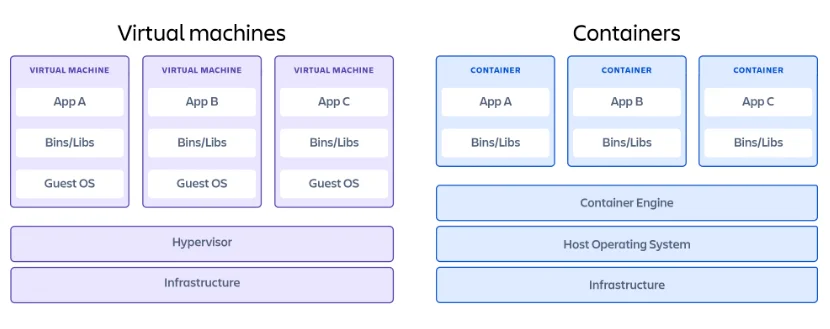

Like VMs, containers are a virtualization technology. The main difference is that, unlike VMs, which each carry a guest OS, containers are lightweight: they do not include a full OS of their own and instead share the kernel of the host OS.

Containers can do this because of software called a container engine. The container engine provides the abstraction between the host OS and the container. We wrote about containers in great detail here.

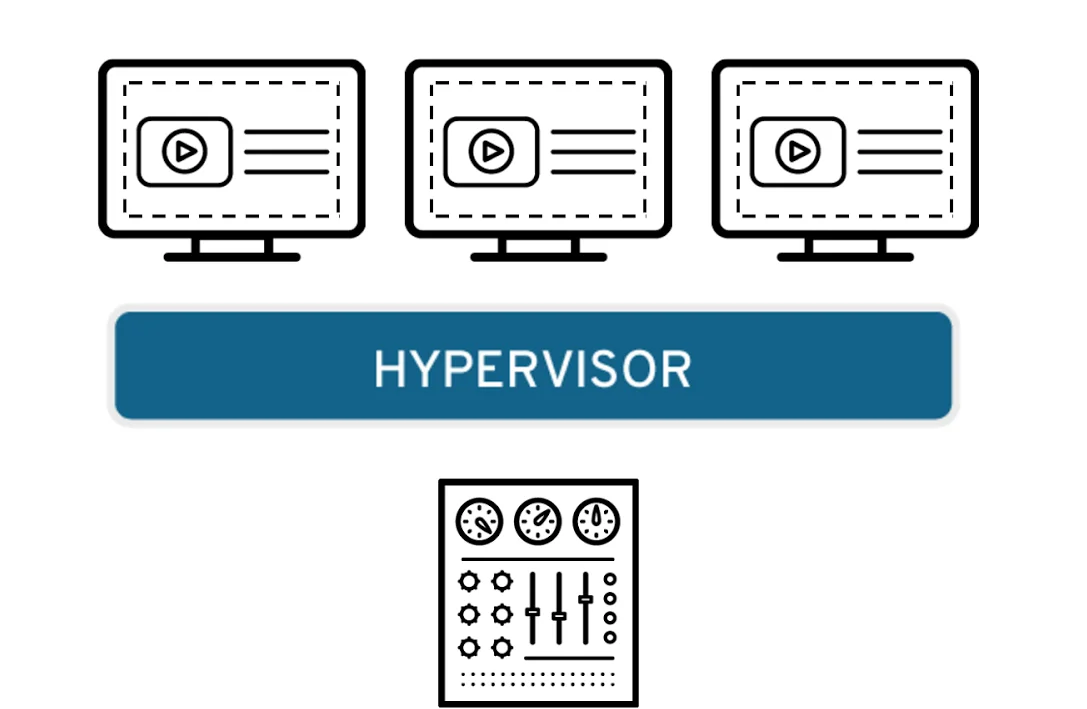

Being lightweight also makes containers well suited to hosting microservices that must scale quickly to serve traffic spikes. This is a huge advantage for online applications such as streaming platforms, where traffic spikes can be more than 100 times the regular traffic.

At such a scale, containers also need better management, since their number can grow and shrink quickly. This is where tools like Kubernetes come into the picture. Kubernetes helps manage a huge fleet of containers easily. This is called container orchestration.
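
To make the scaling decision concrete, here is a minimal sketch of the core rule that the Kubernetes Horizontal Pod Autoscaler documentation describes: desired replicas = ceil(current replicas × current metric / target metric). It leaves out the tolerance band, stabilization windows, and pod readiness handling that the real autoscaler applies. The function name and the default replica bounds are illustrative assumptions.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=100):
    """Scale the replica count in proportion to how far the metric is from target."""
    if current_replicas < 1:
        raise ValueError("current_replicas must be at least 1")
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_replicas * (current_metric / target_metric))
    # Clamp to the configured bounds so a spike cannot scale without limit.
    return max(min_replicas, min(max_replicas, raw))


# Load per container doubles relative to the target: 3 replicas become 6.
print(desired_replicas(3, current_metric=200, target_metric=100))

# A 100x spike asks for 300 replicas but is capped at the assumed maximum of 50.
print(desired_replicas(3, current_metric=10000, target_metric=100, max_replicas=50))

# Load drops to half the target: 4 replicas shrink to 2.
print(desired_replicas(4, current_metric=50, target_metric=100))
```

The same rule handles both growth and shrinkage, which is why an orchestrator can follow traffic in either direction without manual intervention.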

When to use VMs and containers

With such differences, the question is when to use which kind of virtualization. Are VMs now obsolete? No. Although a lot of use cases are better served with lightweight containers, there are instances where VMs are a better choice.

VMs provide certain distinct advantages over containers:

- Security

Since virtual machines are isolated, sometimes down to the hardware level, they can prevent many security leaks.

- Backup and Rollback

It is easy to take snapshots of virtual machines, which makes it easy to back up a VM and restore it to a certain point in the past.

So, whenever there is a need for better security and control over virtualization, VMs are a better option.

Containers provide their own advantages:

- Lightweight

With no OS within them, containers are much lighter than VMs and hence more portable and easily deployable. This also helps scale up containers faster.

Since containers take fewer resources, virtualization with containers is more efficient.

- Portable

The container engine abstracts the application on it from the underlying Host OS.

This makes it easy to port and deploy applications across environments. Developers and system admins do not have to worry about the dreaded “it-works-on-my-machine” reasoning.

In summary, you should default to containers, as they fit most virtualization needs. VMs serve the special cases where greater security and control matter more than efficiency and a small footprint.

Taikun – a game changer for containers

Taikun is a cloud container management tool that gives you a centralized dashboard for containers running across cloud infrastructures like AWS, Azure, Google Cloud, or OpenStack.

Taikun can be installed on-premises or can be used as a cloud service via taikun.cloud. It uses the globally recognized CNCF-certified Kubernetes for container orchestration. This helps you standardize your containerized infrastructure, which makes it easy to port across cloud infrastructures.

Taikun works with private, public and hybrid clouds.