“By 2026, more than 90% of global organizations will be running containerized applications in production, which is a significant increase from fewer than 40% in 2020.” – Gartner

So what are containers? Containers are self-contained software packages that can run a service independently of the underlying environment. A container includes everything the service needs, from binaries to dependent libraries to configuration files. This makes containers easy to port. Because containers share the host operating system's kernel instead of bundling a full guest operating system, they are lightweight compared to virtual machines.

In this blog, we will get into details about what containers are and everything you need to know to get started with containers. You can read our entire series on Containers starting with this blog.

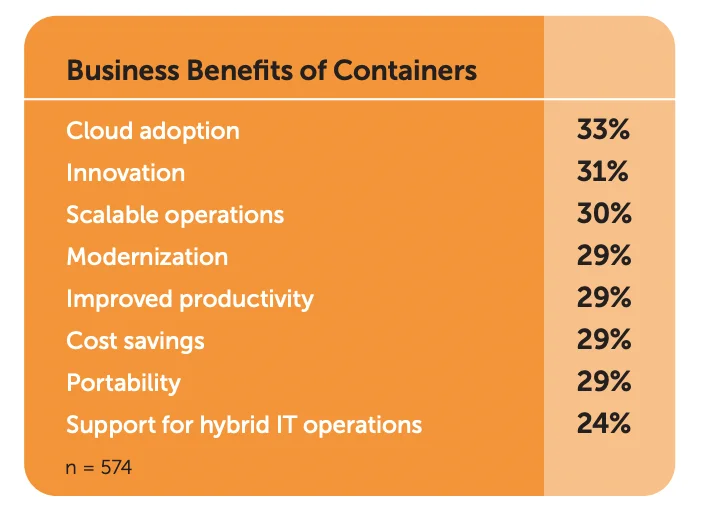

Why containers and what are the benefits of containers?

There are many benefits of using containers in the software development cycle – both in terms of business and technology. Here are some of the most important technology benefits:

- Lightweight

Containers require fewer system resources than virtual machines. They share the host operating system's kernel rather than carrying a full guest operating system, which makes them easier to work with.

- Portability

Since containers are environment-agnostic and lightweight, they are incredibly portable. This makes it easy to move them from development environments to production. Cloud migration also becomes easier as a result.

- Predictability

Containers address a key problem in moving software between environments: dependency management. Because the dependencies travel with the application, development and DevOps teams get more predictable deployments and avoid many environment-related deployment errors.

- Efficiency

With containerization, it becomes easier for technology teams to create auto-scaling infrastructure that tracks business demand. This means fewer resources held in reserve as backup and better utilization of system resources.

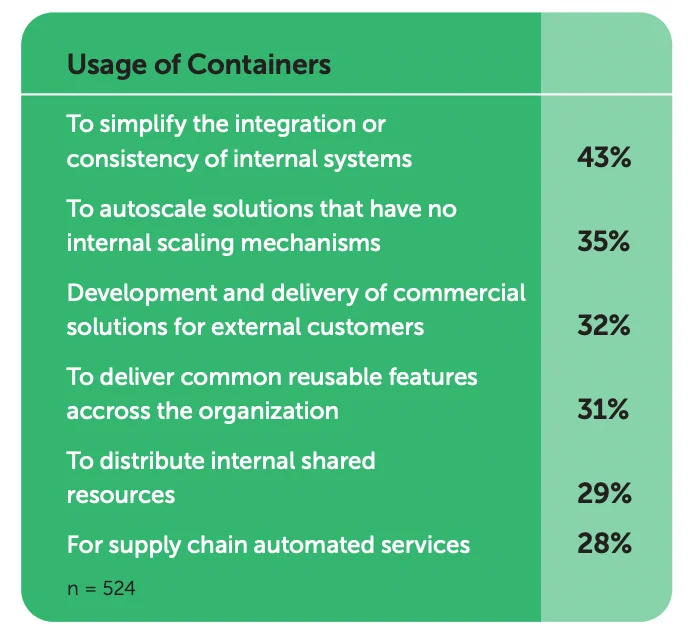

Common container use cases: what are containers used for?

There are many use cases for containers that are already very popular, as Red Hat’s 2021 survey showed.

Although the specific use cases will change depending on a variety of factors, in a general sense, the use cases can be divided into a few common categories:

- “Lift and shift” applications to the cloud

Many of the container use cases are related to moving existing applications from legacy setups to modern cloud architectures. The abstraction over the environment helps in making such transitions.

For many organizations, this is the first step to cloud adoption. Over time, these applications are containerized further by making them more modular and microservice-based. This leads directly to the second category of container use cases.

- Refactoring existing applications

As mentioned, when a monolithic application is divided into containers that each run a microservice, the application as a whole becomes more scalable and more resource-efficient.

This is because, oftentimes, only a few microservices in an application need extra capacity during a traffic spike. When each microservice runs in its own containers, it can be scaled individually to handle the traffic.

This glides into the third category of container use cases: Supporting microservices-based architecture.

- Support for microservices-based architecture

Containers are lightweight and easily replicable. This helps in easily implementing them in a microservices-based setup. In fact, containers are one of the primary reasons that make a microservices-based architecture more scalable.

Distributed architecture is better implemented in a container-based setup.

- Support CI/CD pipelines

Because containers are inherently portable, they make CI/CD pipelines easier to implement. Many CI/CD workflows involve automated deployments, which are straightforward to implement with containers.
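To make the earlier point about scaling microservices individually more concrete, here is a minimal sketch in Python. The service names, traffic figures and per-replica capacities are hypothetical, and the rule used (load divided by capacity, rounded up) is only a simple illustration, not how any particular platform decides.

```python
import math


def replicas_needed(requests_per_second: float, capacity_per_replica: float) -> int:
    """Return how many container replicas are needed to serve the given load."""
    if capacity_per_replica <= 0:
        raise ValueError("capacity_per_replica must be positive")
    # Keep at least one replica running, even when there is no traffic.
    return max(1, math.ceil(requests_per_second / capacity_per_replica))


# Hypothetical traffic during a spike, in requests per second per microservice.
spike_load = {"checkout": 900, "search": 250, "profile": 40}
# Hypothetical capacity of one container replica of each microservice.
capacity = {"checkout": 100, "search": 100, "profile": 100}

plan = {name: replicas_needed(spike_load[name], capacity[name]) for name in spike_load}
print(plan)  # {'checkout': 9, 'search': 3, 'profile': 1}
```

Only the busy service gets many replicas, while the quiet ones stay small. A monolith would have to be scaled as a whole to absorb the same spike.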

Docker – beginning of a container revolution

Docker initiated the first widespread adoption of containers. It started in 2013 as an open-source project called Docker Engine.

Docker Engine helped create an abstraction between the infrastructure and the application dependencies. This allowed developers and operations teams to stop worrying about dependency issues and focus on building functionality.

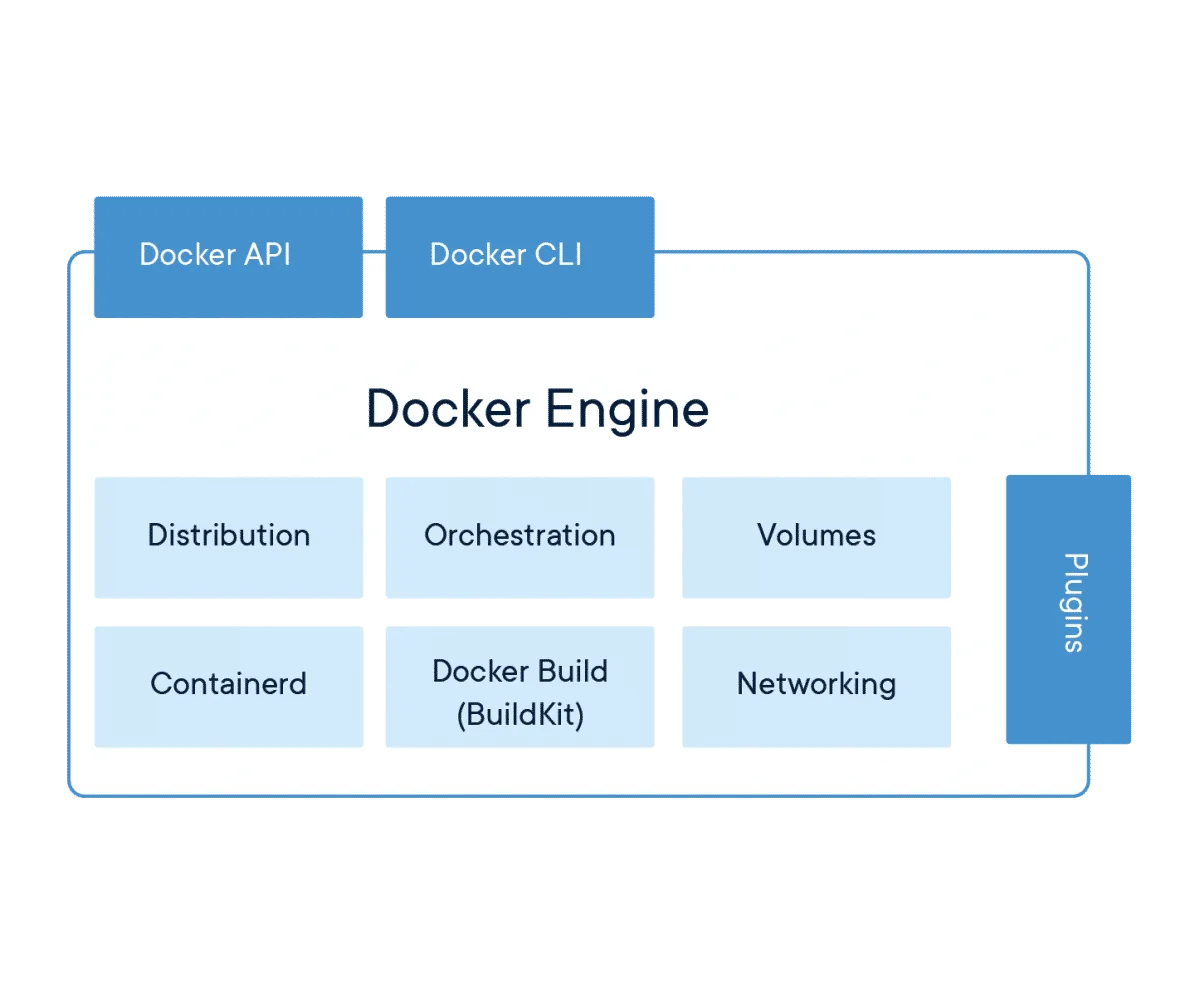

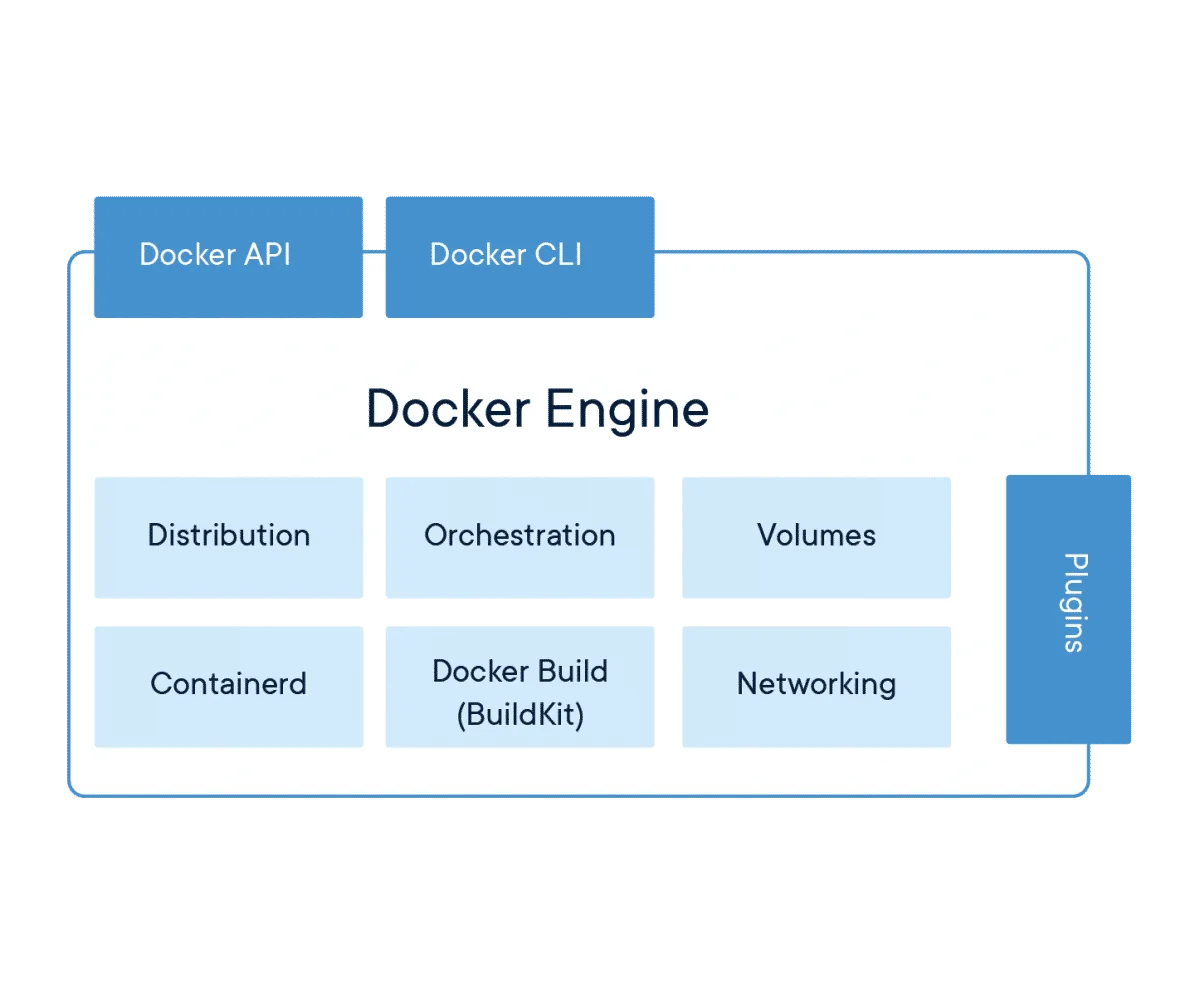

Docker Engine

Docker Engine enabled containerized applications to run consistently on any infrastructure where Docker Engine is available. Docker bundles all application dependencies and packages the application into a container that is then run on Docker Engine.

Docker Engine has many components that help it abstract the underlying infrastructure. Of these, Docker Build (BuildKit) takes instructions from a file called the Dockerfile and builds the Docker image. A Docker image is the package that becomes a container when it is run by the container runtime.
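To give a feel for what such an instructions file looks like, the sketch below uses a small Python helper to render a minimal Dockerfile as text. The helper `render_dockerfile`, the file names `app.py` and `requirements.txt`, and the choice of a Python application are all assumptions made for illustration. The instructions themselves (`FROM`, `WORKDIR`, `COPY`, `RUN`, `CMD`) are standard Dockerfile instructions.

```python
def render_dockerfile(base_image: str, entry_script: str) -> str:
    """Return the text of a minimal Dockerfile for a single-script Python app."""
    lines = [
        # Base image that provides the language runtime.
        f"FROM {base_image}",
        # Working directory inside the image.
        "WORKDIR /app",
        # Copy the dependency list and install the dependencies into the image.
        "COPY requirements.txt .",
        "RUN pip install -r requirements.txt",
        # Copy the application itself (hypothetical file name).
        f"COPY {entry_script} .",
        # Command executed when a container is started from the image.
        f'CMD ["python", "{entry_script}"]',
    ]
    return "\n".join(lines) + "\n"


dockerfile_text = render_dockerfile("python:3.12-slim", "app.py")
print(dockerfile_text)
```

Saved as a file named `Dockerfile` next to the application, this text could then be built with `docker build -t my-app .` and started with `docker run my-app`, where `my-app` is a placeholder image name.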

Containerd is the main daemon that manages the complete container lifecycle on its host system, from image transfer and storage to container execution and supervision, low-level storage, network attachments and beyond.

We cover more on Docker in this blog.

Container Orchestration & Kubernetes

As the number of containers increases in an infrastructure, there is a need to create, manage and destroy containers as and when the need arises. This management of containers is called Container Orchestration.

Every major deployment using containers will have some form of container orchestration to deploy, manage, scale and network the containers together. Kubernetes is one of the most popular tools for Container Orchestration.
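At its core, orchestration can be pictured as repeatedly comparing the state you want with the state you have and acting on the difference. The Python sketch below is a simplified illustration under that assumption. The function `reconcile` and the service names are hypothetical, and real orchestrators do considerably more (health checks, scheduling, networking).

```python
def reconcile(desired: dict[str, int], running: dict[str, int]) -> list[tuple[str, str, int]]:
    """Compare desired and running replica counts and return the actions needed."""
    actions = []
    for service in sorted(set(desired) | set(running)):
        want = desired.get(service, 0)
        have = running.get(service, 0)
        if want > have:
            # Too few containers: create the missing ones.
            actions.append(("start", service, want - have))
        elif want < have:
            # Too many containers: destroy the surplus.
            actions.append(("stop", service, have - want))
    return actions


desired_state = {"web": 3, "worker": 2}
running_state = {"web": 1, "worker": 2, "legacy-job": 1}

print(reconcile(desired_state, running_state))
# [('stop', 'legacy-job', 1), ('start', 'web', 2)]
```

Running this comparison in a loop is what lets an orchestrator create and destroy containers as the need arises without manual intervention.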

The smallest deployable unit in Kubernetes is a pod, which is a collection of one or more containers. Pods run on machines called nodes, and a set of nodes managed together is called a Kubernetes cluster. Kubernetes has extensive controls to manage these clusters.
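The hierarchy can be pictured as a small data model. In Kubernetes, pods are placed on machines called nodes, and the nodes together form the cluster. The classes below are a plain Python illustration with hypothetical names and do not use the Kubernetes API.

```python
from dataclasses import dataclass, field


@dataclass
class Pod:
    name: str
    containers: list[str]  # names of the containers that run together in this pod


@dataclass
class Node:
    name: str
    pods: list[Pod] = field(default_factory=list)


@dataclass
class Cluster:
    nodes: list[Node] = field(default_factory=list)

    def container_count(self) -> int:
        """Count every container in every pod on every node."""
        return sum(len(pod.containers) for node in self.nodes for pod in node.pods)


cluster = Cluster(nodes=[
    Node("node-a", [Pod("web-1", ["nginx"]), Pod("api-1", ["api", "log-agent"])]),
    Node("node-b", [Pod("web-2", ["nginx"])]),
])

print(cluster.container_count())  # 4
```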

We cover Kubernetes in detail in this blog.

Other popular container platforms

Docker is not the only container platform available out there. Here are some of the other major players in the Container market. All players offer container orchestration as part of their platform.

Amazon Elastic Container Service (ECS)

Amazon Elastic Container Service (ECS) is a service that runs containers on Amazon EC2 instances. It offers container orchestration capabilities similar to those of Kubernetes. The service, however, is tied to AWS and is not designed to manage containers on other clouds.

Google Kubernetes Engine (GKE)

Google Kubernetes Engine (GKE) uses Google Cloud infrastructure to deploy, scale and manage containers and their applications. Google runs the Kubernetes clusters on its Compute Engine instances. Standard Kubernetes commands and tools work with GKE.

Google Cloud also has pre-built Kubernetes application and deployment templates for quickly deploying containerized solutions on GKE. Google Cloud Marketplace offers a wide variety of ready-made application templates and container images that can be deployed on GKE.

Microsoft Azure Container Instances

Microsoft’s Azure platform offers Azure Container Instances (ACI), which lets developers deploy containers to the Azure cloud without managing virtual machines. ACI can pull images from Azure Container Registry and also from public container registries like Docker Hub.

ACI covers the basic scheduling side of container orchestration: users simply launch the containers and Azure handles the underlying infrastructure. For fuller orchestration features such as automatic scaling and coordinated upgrades, Azure offers Azure Kubernetes Service (AKS).

Red Hat OpenShift Container Platform

Red Hat OpenShift Container Platform allows private or public management of containers and is built on Kubernetes. It can be set up entirely on a private cloud.

Cloud providers such as IBM Cloud and AWS also offer Red Hat OpenShift Container Platform as a service on their public cloud infrastructure.

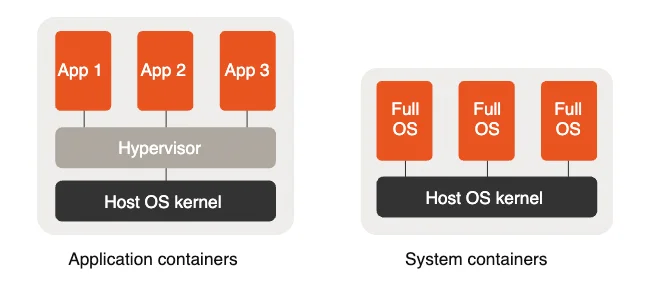

LXD – Linux System Container

LXD is a system container manager for Linux that virtualizes software at the operating system level, using features of the Linux kernel. LXD builds on the earlier LXC project and uses LXC internally to run system containers.

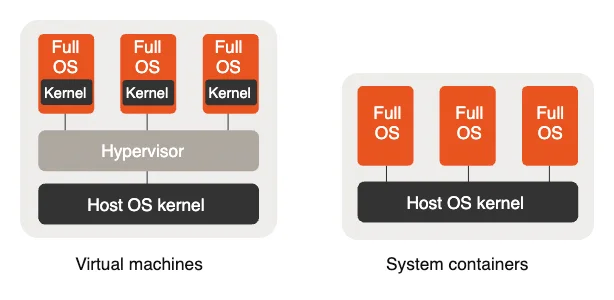

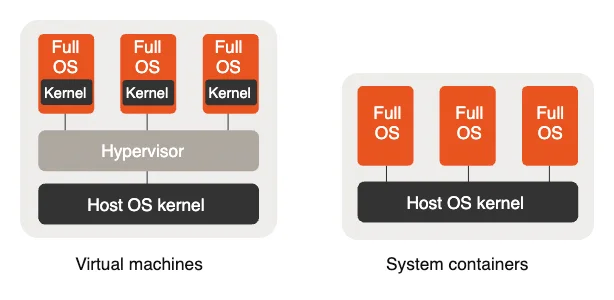

LXD differs from a regular application container, where the virtualization happens between the application and the operating system. In LXD, the entire operating system environment is virtualized, so a system container behaves much like a full Linux machine, yet unlike a regular hypervisor-based virtual machine it does not boot its own kernel.

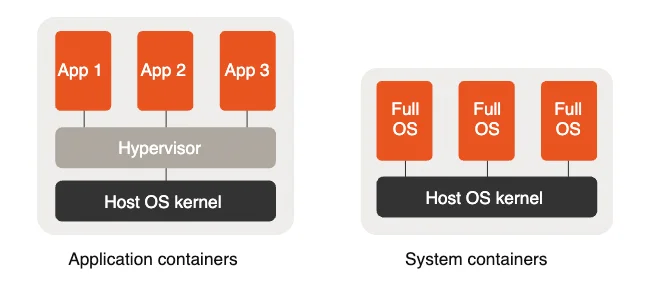

Application containers help virtualize applications and separate components for each functionality, while system containers allow the developer to create different user spaces within the container.

The LXD system also differs from a regular VM since the containers do not have a separate kernel to boot from. System containers share the host kernel. This works well when the functionality required by the system containers is provided by the host kernel.
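One simple way to observe the shared kernel is to print the kernel release from the host and again from inside a container on that host. The small Python sketch below does this with the standard library. The function name `kernel_release` is just an illustrative choice.

```python
import platform


def kernel_release() -> str:
    """Return the release string of the kernel this process is running on."""
    return platform.release()


print(kernel_release())
```

On a Linux host, running the script on the host and inside a container should print the same release, because both use the host kernel. Inside a regular VM it would print the release of the guest's own kernel.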

CNCF – Cloud Native Computing Foundation

With so many different types of containers and container orchestration tools, it can become extremely difficult to maintain interoperability and consistency between container technologies.

CNCF is a multi-industry collaborative effort to bring standardization to the container industry. CNCF-certified container technology is expected to follow common conformance guidelines and to be interoperable with other certified container technologies.

CNCF is part of the Linux Foundation and has played a pivotal role in the accelerated growth of container adoption.

If you are new to using container technology, it is advisable to use CNCF-certified tools and offerings to ensure consistency in performance and interoperability.

Taikun – Container management simplified

Taikun is a CNCF-certified, Kubernetes-based container management tool designed to make container management simple. Itera, the company behind Taikun, is a Silver member of the Cloud Native Computing Foundation, a commitment aimed at helping you standardize your containerized infrastructure.

Taikun works with Google Cloud, Amazon Web Services, Azure, and OpenStack and gives you a single dashboard to manage your public, private or hybrid cloud needs effectively.