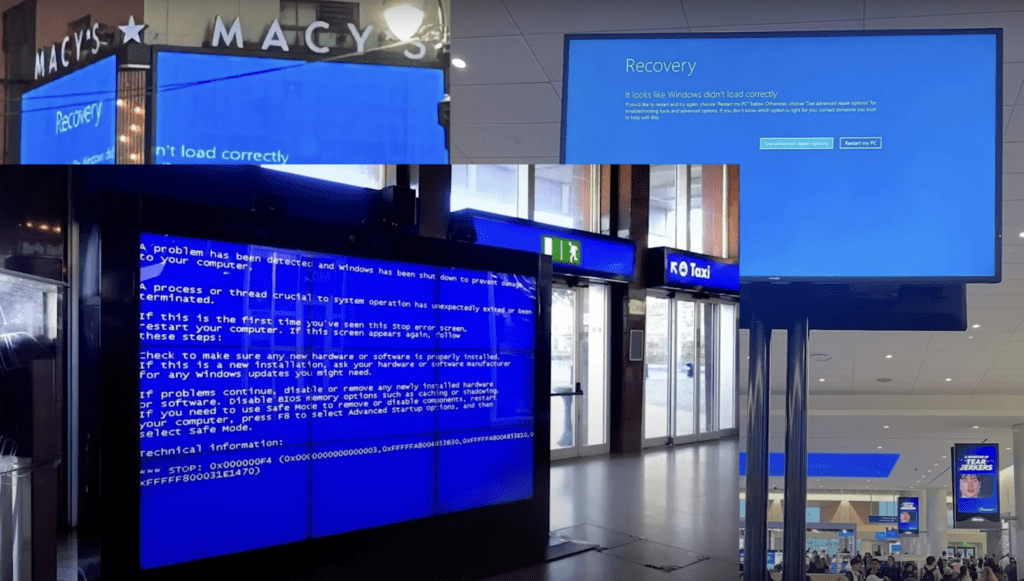

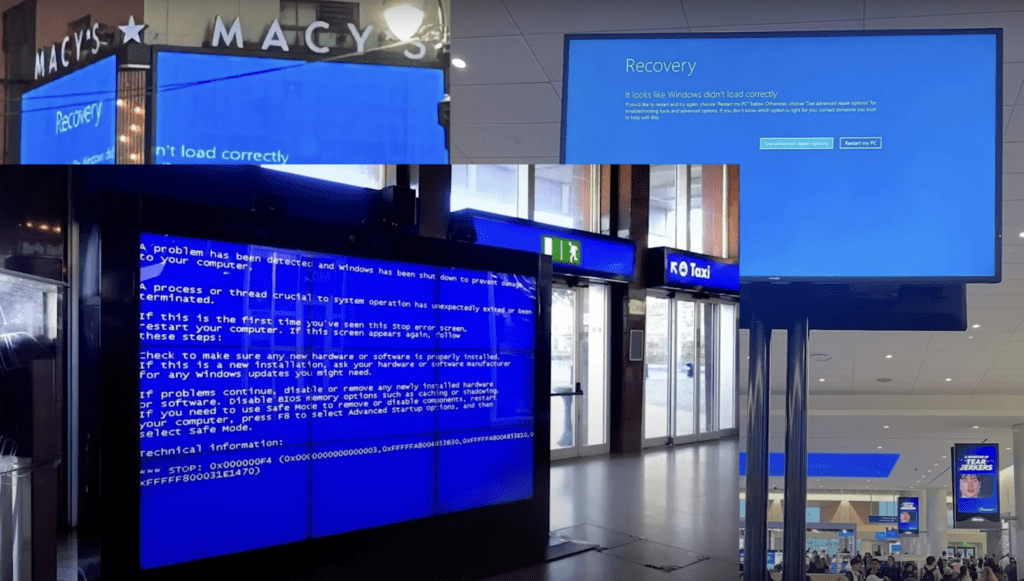

The CrowdStrike Incident: A Global Wake-Up Call for Cloud Resilience

On July 19, 2024, the digital world experienced a seismic shock when a CrowdStrike update for Falcon Sensor, its software that scans computers for intrusions and signs of hacking, led to a global outage affecting countless organizations worldwide. As a Principal Product Evangelist at Taikun, I believe this incident highlights the critical […]

Announcing Our Strategic Partnership with Sardina Systems

We’re thrilled to announce our strategic partnership with Sardina Systems, a leading provider of OpenStack cloud solutions. This collaboration brings together the best of both worlds – Taikun CloudWorks’ advanced ‘Managed Kubernetes’ and application delivery platform, and Sardina Systems’ FishOS cloud management platform. At Taikun, we’re on a mission to make Kubernetes almost invisible to […]

Challenges and limitations of multi-tenancy and self-service in OpenShift

OpenShift has quickly emerged as a powerful solution in the ever-evolving landscape of cloud computing and container orchestration, giving organizations an agile, scalable platform for their workloads.

The Pros and Cons of using Kubernetes for Microservices Architecture

Kubernetes has quickly gained traction as a platform for managing microservices architectures because it helps businesses deploy, manage, and scale containerized applications. The Cloud Native Survey indicated that 96% of firms are either actively using or evaluating Kubernetes, a significant increase from previous surveys.

Kubernetes Deployment: How to Run a Containerized Workload on a Cluster

In this blog, we will discuss Kubernetes deployments in detail and cover everything you need to know to run a containerized workload on a cluster. The smallest unit of a Kubernetes deployment is a pod, which is a collection of one or more containers. The smallest deployment in Kubernetes is therefore a single pod with one container in it. As you may know, Kubernetes is a declarative system: you describe the system you want and let Kubernetes take the actions needed to create it.
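To make the declarative idea concrete, here is a minimal sketch in Python that builds the smallest deployment described above: one pod with one container. The helper `build_deployment`, the name `hello-web`, and the image tag `nginx:1.27` are illustrative assumptions, not values from the original post.

```python
import json


def build_deployment(name: str, image: str, replicas: int = 1) -> dict:
    """Return a minimal apps/v1 Deployment manifest as a plain dict.

    The name and image are placeholders chosen for illustration.
    """
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            # Desired state: how many identical pods should exist.
            "replicas": replicas,
            # The selector must match the labels on the pod template.
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                # A pod is one or more containers; here, exactly one.
                "spec": {"containers": [{"name": name, "image": image}]},
            },
        },
    }


if __name__ == "__main__":
    # Print the manifest as JSON, which kubectl accepts alongside YAML.
    print(json.dumps(build_deployment("hello-web", "nginx:1.27"), indent=2))
```

Assuming the script is saved as `deployment.py`, its output could be piped to `kubectl apply -f -`. The manifest only states the desired outcome; Kubernetes decides which actions are needed to reach it.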

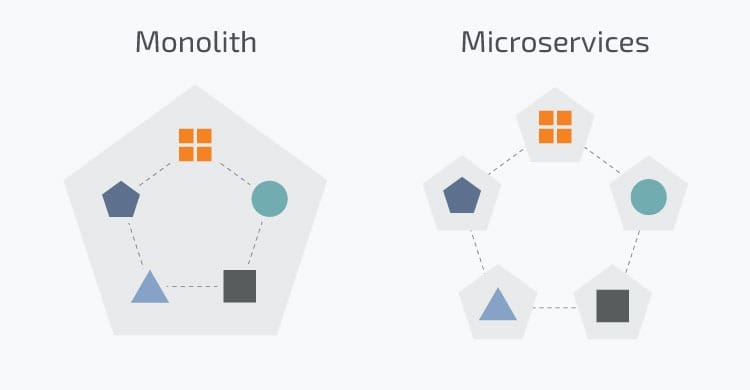

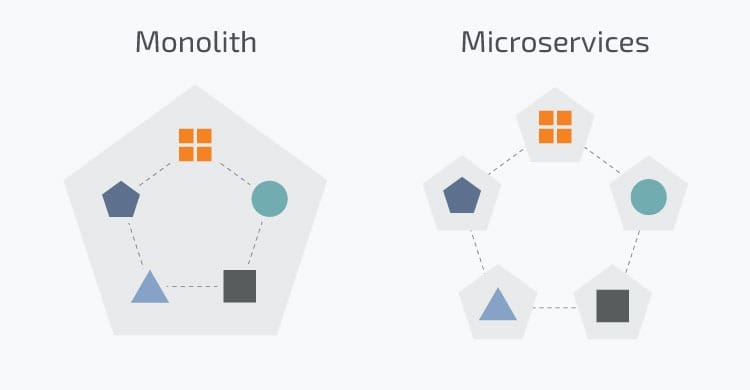

Microservices vs. Monolithic Architectures

Software development in the last decade has largely moved from a monolithic architecture to a microservices-based architecture. The adoption of cloud platforms accelerated that transition to microservices architecture. But what does it really mean? Why did that happen, and which architecture is best for your development project? How does microservices architecture tie in with containers and cloud setups?

How to Handle Container Storage

Storage is one of the most important aspects to take care of when working with containers in any architecture. By default, the data inside a container is destroyed along with the container. This makes it difficult for other containers to access that data and carry the process forward.

In architectures at scale, container orchestration is handled by tools like Kubernetes and Docker. This means that multiple containers are created, managed, and destroyed within the same workflow.
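One common way around the default behavior is a named Docker volume, which outlives any single container. The sketch below only assembles and prints the `docker run` commands and does not execute them; the helper `docker_run_with_volume`, the volume name `shared-data`, and the `alpine` image are assumptions made for illustration.

```python
import shlex


def docker_run_with_volume(image, volume, mount_path, command=()):
    """Build a `docker run` argument list that mounts a named volume.

    `-v <volume>:<path>` attaches the named volume at <path> inside the
    container, and `--rm` removes the container (not the volume) on exit.
    """
    return ["docker", "run", "--rm", "-v", f"{volume}:{mount_path}", image, *command]


# First container writes a file into the shared volume, then exits.
writer = docker_run_with_volume(
    "alpine", "shared-data", "/data", ["sh", "-c", "echo hello > /data/msg.txt"]
)
# Second container mounts the same volume and reads the file back.
reader = docker_run_with_volume("alpine", "shared-data", "/data", ["cat", "/data/msg.txt"])

if __name__ == "__main__":
    print(shlex.join(writer))
    print(shlex.join(reader))
```

Because both commands mount the same named volume, the file written by the first container should still be available to the second, even though the first container has already been removed.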

Introduction to Container Networking in Docker

Most containerized applications need some form of communication with other network devices and applications. This is where container networking concepts play an important role. In this blog, we will tell you everything you need to know about container networking and how to get started on it.

Running Your First Own Container Images in Docker

Docker Desktop gives you a straightforward way to use any Docker image and run a container.

You can choose to use any image. To start with, we advise you to take an image from Docker Hub. As discussed in the previous blog, Docker Hub is a public repository of Docker images that are verified by

How to start using Docker

In 2013, Docker revolutionized the virtualization space with Docker Engine. Containerization became more mainstream, and Docker became synonymous with containers. With Docker, developers could standardize the environments their applications run in. This standardization paved the way for smoother deployments and faster time to market. In this blog, we tell you everything you need to get started with Docker. This is part of our extensive series of blogs on Containers.